
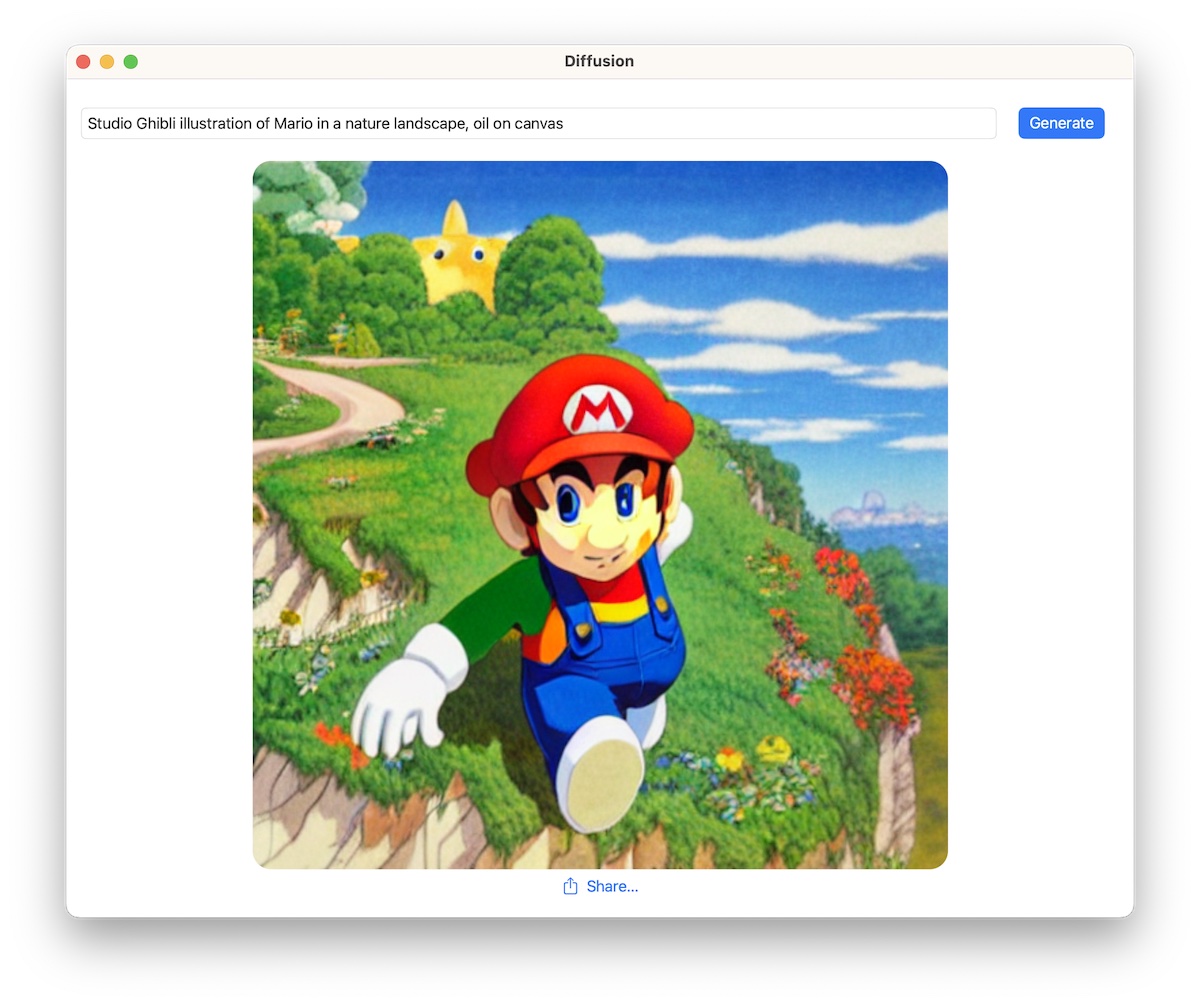

Diffusion

This is a simple app that shows how to integrate Apple's Core ML Stable Diffusion implementation in a native SwiftUI application. It can be used for faster iteration, or as sample code for other use cases.

On first launch, the application downloads a zipped archive with a Core ML version of Runway's Stable Diffusion v1.5, from this location in the Hugging Face Hub. This process takes a while, as several GB of data have to be downloaded and unarchived.
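As a rough illustration of the "download only on first launch" idea, the sketch below checks whether an unarchived model directory already exists and contains files. The function name `needsDownload` and the directory layout are hypothetical, and this is not necessarily how the app itself makes that decision.

```swift
import Foundation

/// Returns true when the model still has to be downloaded, i.e. the
/// unarchived model directory is missing, is not a directory, or is empty.
/// (Hypothetical helper for illustration; not taken from the app's source.)
func needsDownload(modelDirectory: URL, fileManager: FileManager = .default) -> Bool {
    var isDirectory: ObjCBool = false
    guard fileManager.fileExists(atPath: modelDirectory.path, isDirectory: &isDirectory),
          isDirectory.boolValue else {
        return true
    }
    // An existing but empty directory is treated as an interrupted download.
    let contents = (try? fileManager.contentsOfDirectory(atPath: modelDirectory.path)) ?? []
    return contents.isEmpty
}
```

A caller would run this check at startup and only start the multi-GB download and unarchive step when it returns `true`.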

For faster inference, we use a very fast scheduler, DPM-Solver++, which we ported to Swift. Since this scheduler is still not available in Apple's GitHub repository, the application depends on the following fork instead: https://github.com/pcuenca/ml-stable-diffusion. Our Swift port is based on Diffusers' DPMSolverMultistepScheduler, with a number of simplifications.
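To give a feel for what such a scheduler computes, here is a minimal sketch of a single first-order DPM-Solver++ update on plain `Double` arrays. It is not the port used by the app: the multistep scheduler also combines previous model outputs for higher-order updates, which is omitted here. The type `NoiseLevel` and the function name are hypothetical, and the model is assumed to predict the clean sample `x0`.

```swift
import Foundation

/// Noise schedule values at one timestep: a noisy sample is modelled as
/// alpha * x0 + sigma * noise. (Hypothetical type for illustration.)
struct NoiseLevel {
    let alpha: Double
    let sigma: Double
    /// Half log-SNR: lambda = log(alpha) - log(sigma).
    var lambda: Double { log(alpha) - log(sigma) }
}

/// One first-order DPM-Solver++ update from noise level `s` to the less
/// noisy level `t`, given the model's prediction of the clean sample.
func dpmSolverPlusPlusFirstOrderStep(
    sample: [Double],
    predictedX0: [Double],
    from s: NoiseLevel,
    to t: NoiseLevel
) -> [Double] {
    let h = t.lambda - s.lambda
    let sampleScale = t.sigma / s.sigma
    let x0Scale = -t.alpha * (exp(-h) - 1)  // same as t.alpha * (1 - exp(-h))
    return zip(sample, predictedX0).map { x, x0 in sampleScale * x + x0Scale * x0 }
}
```

One property worth noting: if the prediction of `x0` is exact, the update maps `s.alpha * x0 + s.sigma * noise` to `t.alpha * x0 + t.sigma * noise`, so the step moves the sample to the new noise level without changing the underlying noise.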

Compatibility

- macOS Ventura 13.1, iOS/iPadOS 16.2, Xcode 14.2.

- Performance (after initial generation, which is slower):

  - ~10s on macOS, on a MacBook Pro M1 Max (64 GB).

  - ~1 min 15s on an iPhone 14 Pro.

How to Build

If you clone or fork this repo, please update common.xcconfig with your development team identifier. Code signing is required to run on iOS, but it's currently disabled for macOS.

Limitations

- The UI does not expose a way to configure the scheduler, number of inference steps, or generation seed. These are all available in the underlying code.

- A single model (Stable Diffusion v1.5) is considered. The Core ML compute units have been hardcoded to CPU and GPU, since that's what gives the best results on my Mac (M1 Max MacBook Pro).

- Sometimes generation returns a `nil` image. This needs to be investigated.
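For reference, restricting Core ML to CPU and GPU, as in the compute units limitation above, is usually expressed through `MLModelConfiguration`. The snippet below is a minimal sketch of that setting only. How the app passes the configuration on to the pipeline is not shown here, and the variable name is an assumption.

```swift
import CoreML

// Restrict Core ML to CPU and GPU, leaving out the Neural Engine.
// Other MLComputeUnits values include .cpuOnly and .all.
let configuration = MLModelConfiguration()
configuration.computeUnits = .cpuAndGPU
```

Making this value selectable per model and platform would be one way to approach the "recommended compute units" item under Next Steps.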

Next Steps

- Improve UI. Allow the user to select generation parameters.

- Allow other models to run. Provide a recommended "compute units" configuration based on model and platform.

- Implement other interesting schedulers.

- Implement negative prompts.

- Explore other features (image to image, for example).